Sunday, November 16, 2008

Run it or rip it - UPDATED!

NeoGAF has posted this link ( http://www.neogaf.com/forum/showpost.php?p=13630060&postcount=189 ) where they tested Halo 3 with and without the install. This drives home my point exactly: when a game is built with the ability to adapt to the hardware (HDD or no HDD), you get a much better game. In some of the cases shown, the game actually ran slower after being copied to the HDD, because it is already doing some rather intelligent caching.

So that's my rant for the day. Careful what you wish for, and please don't make me wait to play!?!?!

Saturday, November 1, 2008

Run it or rip it!

Let's get to some specifics (with the 360). The 360's DVD drive has a maximum transfer rate (best case, with a pristine disc) of about 12 MB/s. Accessing the same data from the HDD would be about 10x the speed. This makes loading the required assets much easier for the programmer. The 360 has only 512 MB of system memory (shared with the GPU), so there is a lot of loading from disc. To get around this, programmers have devised complex caching and prediction algorithms to be sure the user is not stalled. In some cases, companies have chosen to load a limited set of assets depending on whether the user has an HDD, since that is where items are cached. Note that currently no retail 360 game copies the entire game to the HDD, just individual assets (think cache).

A recent post ( http://www.joystiq.com/2008/10/31/xbox-360-load-time-comparison-dvd-vs-hard-drive/ ) has shown the upside: a quieter system and slightly faster load times. They measured the copy rate at about 1.7 GB per minute (so they are seeing about 28 MB/s on a file copy). For an 8 GB game this would leave the user sitting (best case) for slightly under 5 minutes.
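The arithmetic behind those two figures is quick to check. This is only a worked calculation using the numbers quoted in the post, assuming decimal units (1 GB = 1000 MB); the function names are mine.

```python
# Worked arithmetic for the install-time estimate (decimal units: 1 GB = 1000 MB).
COPY_RATE_GB_PER_MIN = 1.7   # measured file-copy rate quoted in the linked post
GAME_SIZE_GB = 8.0           # example game size used above


def copy_rate_mb_per_s(gb_per_min: float) -> float:
    """Convert a GB-per-minute copy rate into MB per second."""
    return gb_per_min * 1000.0 / 60.0


def install_minutes(size_gb: float, gb_per_min: float) -> float:
    """Best-case time to copy a game of the given size."""
    return size_gb / gb_per_min


rate = copy_rate_mb_per_s(COPY_RATE_GB_PER_MIN)
minutes = install_minutes(GAME_SIZE_GB, COPY_RATE_GB_PER_MIN)
print(f"{rate:.1f} MB/s, {minutes:.1f} minutes")  # 28.3 MB/s, 4.7 minutes
```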

I am not sure this is a good thing. What I would like to see is the strategy that Games for Windows uses: give the user the option, when they put the disc in the first time, to wait or not to wait. If they choose not to wait, load only the required level (let's say, for argument's sake, 1 GB of data), which would take less than a minute (show some screenshots or something in between ;) ). Then predict the next, let's say, level to be loaded and copy its files during idle cycles in the game (of course giving priority to rendering and game code if they need the resources). This is the lazy loading pattern, used in programming for many different types of applications.

I would hope that the hardware engineers are thinking about this for the next-gen consoles, to allow the movement of assets from optical to HDD. Ultimately downloaded games would be great (no more copying), but there are costs with this as well. HDDs would have to be much larger (hundreds of GBs or TBs), and that is a big cost to add to the price of a console. Anyway, just my thoughts.
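To make the idea concrete, here is a minimal sketch of lazy loading with idle-time prefetch. Everything in it is hypothetical (the class name, the "next level in story order" prediction, and the fake disc copy function); it is not how any console or Games for Windows title actually implements this.

```python
from typing import Callable, Dict, List, Optional


class LazyLevelInstaller:
    """Copies levels from a slow source (disc) to a fast cache (HDD) on demand."""

    def __init__(self, level_order: List[str], copy_from_disc: Callable[[str], bytes]):
        self.level_order = level_order
        self.copy_from_disc = copy_from_disc
        self.cache: Dict[str, bytes] = {}

    def load(self, level: str) -> bytes:
        # Blocking path: only pay the copy cost for the level needed right now.
        if level not in self.cache:
            self.cache[level] = self.copy_from_disc(level)
        return self.cache[level]

    def predict_next(self, current: str) -> Optional[str]:
        # Simplest possible prediction (an assumption): the next level in story order.
        i = self.level_order.index(current)
        return self.level_order[i + 1] if i + 1 < len(self.level_order) else None

    def on_idle(self, current: str) -> Optional[str]:
        # Meant to be called only when rendering and game code have spare time.
        nxt = self.predict_next(current)
        if nxt is not None and nxt not in self.cache:
            self.cache[nxt] = self.copy_from_disc(nxt)
            return nxt
        return None


disc_reads: List[str] = []


def fake_disc_copy(level: str) -> bytes:
    """Stand-in for the real optical read; records which levels were copied."""
    disc_reads.append(level)
    return f"assets for {level}".encode()


installer = LazyLevelInstaller(["level1", "level2", "level3"], fake_disc_copy)
installer.load("level1")      # player waits for this one
installer.on_idle("level1")   # level2 is copied in the background
installer.load("level2")      # already cached, no disc read
print(disc_reads)             # ['level1', 'level2']
```

The point of the design is that the only blocking copy is the first level; every later one is hidden behind gameplay as long as the prediction is right.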

Friday, August 15, 2008

Post Mortem: Advances in Real-Time Rendering Part 1

Global Illumination

The discussion about global illumination was headed by Hao Chen, lead graphics software architect at Bungie.

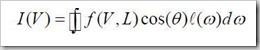

Global illumination in real-time applications has become a very important concept in new games. If the surface does not emit any light (i.e. it is not emissive), the formula used to calculate global illumination is the one shown.

This formula assumes a BRDF (f) is already known. This presented the Halo 3 engineers with two challenges. The first was that while this formula can be solved for a small number of simple lights (point lights, for instance), it is not feasible to solve in general at the real-time rates the engine needs. The second is that Halo contains many different types of surfaces (shiny, dull, etc.). The Phong BRDF model has been used in interactive, real-time environments, but again only with a small number of point lights. Also, the engineers did not feel that the Phong model would capture the detail they were looking for.
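For reference, the non-emissive rendering equation is commonly written in the form below. This is the textbook notation (after [KAJIYA86]), not a transcription of the slide, so the symbols may differ from the ones Hao used: L_o is outgoing radiance at point x in direction ω_o, L_i is incoming radiance, f is the BRDF, n is the surface normal, and Ω is the hemisphere around n.

$$
L_o(x, \omega_o) = \int_{\Omega} f(x, \omega_i, \omega_o)\, L_i(x, \omega_i)\, (n \cdot \omega_i)\, d\omega_i
$$

With a handful of point lights the integral collapses into a short sum, which is why that case is cheap; with light arriving from every direction it does not.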

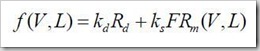

So the approach taken was to rely on the Cook-Torrance BRDF model. The system could be switched to other models if they were found to be more accurate.

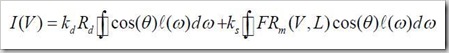

So the final rendering equation would then be:

There is yet another problem. The diffuse and specular terms in the equation above each involve an integral that is quite expensive to calculate. If the lights were simple point lights this would probably not be an issue, but as a different type of light source is used here, the equation cannot be evaluated as written. So the team turned to SH (spherical harmonics).

Specifically, there are two cases (diffuse and specular). For diffuse lighting there are shadowed and unshadowed cases that need to be calculated. Unshadowed diffuse can be calculated in the shader using a quadratic polynomial approximation. Shadowed diffuse can take the result of the pre-computed radiance transfer (PRT) method and combine it (as a dot product) with the incoming light. There is still one remaining issue in calculating accurate diffuse lighting: the equation encodes the incident radiance at a single point, which is not accurate when lighting an entire scene. The usual solution is to choose various random sample points and interpolate to fill in the rest. This gives OK results on small areas but not on big scenes. The other strategy, and the one chosen here, is to build light maps and grid the scene (adding sample points per cell). In Halo 3, the choice was made to use a photon mapper to "bake" the incident radiance into these light maps. This is an offline process.

The specular reflectance was much harder to calculate. The problem is that glossy surfaces contain a full range of frequencies. The choice was made to break this down into three frequency bands (low, mid, high). The high frequencies are calculated in the shader (BRDF), the middle frequencies are handled by cube maps, and the low frequencies are handled by the BRDF again (which is parameterized).
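The two diffuse cases can be sketched in a few lines. This is an illustration rather than Bungie's shader code: it assumes 9 SH coefficients (3 bands) for a single colour channel, uses the quadratic polynomial and constants from [RAMAMOORTHIHANRAHAN01] for the unshadowed case, and treats the PRT transfer vector as something already baked offline.

```python
from typing import Sequence

# Constants from Ramamoorthi and Hanrahan's irradiance environment map paper.
C1, C2, C3, C4, C5 = 0.429043, 0.511664, 0.743125, 0.886227, 0.247708


def unshadowed_irradiance(L: Sequence[float], n: Sequence[float]) -> float:
    """Irradiance as a quadratic polynomial in the unit normal n = (x, y, z).

    L holds 9 SH lighting coefficients for one colour channel, ordered
    [L00, L1-1, L10, L11, L2-2, L2-1, L20, L21, L22].
    """
    x, y, z = n
    L00, L1m1, L10, L11, L2m2, L2m1, L20, L21, L22 = L
    return (C1 * L22 * (x * x - y * y)
            + C3 * L20 * z * z
            + C4 * L00
            - C5 * L20
            + 2.0 * C1 * (L2m2 * x * y + L21 * x * z + L2m1 * y * z)
            + 2.0 * C2 * (L11 * x + L1m1 * y + L10 * z))


def shadowed_diffuse(transfer: Sequence[float], light: Sequence[float]) -> float:
    """PRT: exit radiance is the dot product of transfer and lighting vectors."""
    return sum(t * l for t, l in zip(transfer, light))


# Constant (ambient-only) lighting: irradiance is the same for every normal.
ambient = [1.0, 0, 0, 0, 0, 0, 0, 0, 0]
up = unshadowed_irradiance(ambient, (0.0, 0.0, 1.0))
side = unshadowed_irradiance(ambient, (1.0, 0.0, 0.0))

# A made-up transfer vector for a half-occluded sample point.
half_occluded = [0.5, 0, 0, 0, 0, 0, 0, 0, 0]
lit = shadowed_diffuse(half_occluded, [2.0, 0, 0, 0, 0, 0, 0, 0, 0])
print(up, side, lit)
```

Either way the per-pixel cost is a fixed handful of multiply-adds, independent of how complex the lighting environment was before it was projected into SH.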

REFERENCES:

[BASRIJACOBS03] BASRI, R., AND JACOBS, D. W. 2003. Lambertian reflectance and linear subspaces. IEEE Trans. Pattern Anal. Mach. Intell. 25, 2, pp. 218–233.

[BLINN77] BLINN, J. F. 1977. Models of light reflection for computer synthesized pictures. ACM SIGGRAPH Comput. Graph. 11, 2, pp. 192–198.

[CHEN08] CHEN, H. Lighting and materials of Halo 3. Game Developers Conference, 2008.

[COOKTORRANCE81] COOK, R. L., AND TORRANCE, K. E. 1981. A reflectance model for computer graphics. In Proceedings of ACM SIGGRAPH 1981, pp. 307–316.

[GOODTAYLOR05] GOOD, O., AND TAYLOR, Z. 2005. Optimized photon tracing using spherical harmonic light maps. In Proceedings of ACM SIGGRAPH 2005, Technical Sketches, p. 53.

[GSHG98] GREGER, G., SHIRLEY, P., HUBBARD, P. M., AND GREENBERG, D. P. 1998. The irradiance volume. IEEE Comput. Graph. Appl. 18, 2, pp. 32–43.

[HUWANG08] HU, Y., AND WANG, X. Lightmap compression in Halo 3. Game Developers Conference, 2008.

[ICG86] IMMEL, D. S., COHEN, M. F., AND GREENBERG, D. P. 1986. A radiosity method for non-diffuse environments. ACM SIGGRAPH Comput. Graph. 20, 4, pp. 133–142.

[KAJIYA86] KAJIYA, J. T. 1986. The rendering equation. In Proceedings of ACM SIGGRAPH 1986, pp. 143–150.

[KSS02] KAUTZ, J., SLOAN, P.-P., AND SNYDER, J. 2002. Fast, arbitrary brdf shading for low-frequency lighting using spherical harmonics. In Proceedings of the 13th Eurographics workshop on Rendering 2002, pp. 291–296.

[NDM05] NGAN, A., DURAND, F., AND MATUSIK, W. 2005. Experimental analysis of brdf models. In Proceedings of the Eurographics Symposium on Rendering 2005, pp. 117–226.

[OAT05] OAT, C. Irradiance Volumes for Games, Game Developers Conference, 2005. http://ati.amd.com/developer/gdc/GDC2005_PracticalPRT.pdf

[PSS99] PREETHAM, A.J., SHIRLEY, P. AND SMITS, B. 1999. A Practical Analytic Model for Daylight, In Proceedings of Siggraph 1999, pp. 91 – 100, Los Angeles, CA.

[RAMAMOORTHIHANRAHAN01] RAMAMOORTHI, R., AND HANRAHAN, P. 2001. An efficient representation for irradiance environment maps. In Proceedings of ACM SIGGRAPH 2001, pp. 497–500.

[RAMAMOORTHIHANRAHAN01B] RAMAMOORTHI, R., AND HANRAHAN, P. 2001. On the relationship between radiance and irradiance: Determining the illumination from images of a convex Lambertian object. Journal of the Optical Society of America, Vol. 18, 10, pp. 2448–2459.

[RAMAMOORTHIHANRAHAN02] RAMAMOORTHI, R., AND HANRAHAN, P. 2002. Frequency space environment map rendering. In Proceedings of ACM SIGGRAPH 2002, 517–526.

[SCHLICK94] SCHLICK, C. 1994. An inexpensive BRDF model for physically-based rendering. Computer Graphics Forums. 13, (3), 233–246.

[SLOANSNYDER02] SLOAN, P.-P., KAUTZ, J., AND SNYDER, J. 2002. Precomputed radiance transfer for real-time rendering in dynamic, low frequency lighting environments. ACM Trans. Graph. 21, 3, 527–536.

[SHHS03] SLOAN, P.-P., HALL, J., HART, J., AND SNYDER, J. 2003. Clustered principal components for precomputed radiance transfer. ACM Trans. Graph. 22, 3, 382–391.

[VILLEGASSEAN08] VILLEGAS, L., AND SEAN S. Life on the Bungie Farm: Fun Things to Do with 180 Servers. Game Developers Conference, 2008.

Tuesday, June 17, 2008

Latest reads

Just dropping a line on a book that I am reading. It is focused on advanced .net debugging techniques and tools.

Friday, May 23, 2008

Something new

I have recently been spending some time learning the Lua language. I am looking into it mainly because it is a standard language used by game developers for in-game scripting. I picked up this book to aid in my studies. More to follow on this.

Wednesday, April 23, 2008

Elegant Memory Management Ideas

While working on a recent project involving some callback data in an animation with DirectX, an interesting solution to a common problem was presented. In order to describe the solution correctly, I must set up the scenario.

We have an animation that is loaded from say an X file with the DirectX API. When using the convenient function to load the X file (D3DXLoadMeshHierarchyFromX) the obvious problem is any callbacks you will require for animation sets will most likely not be stored in the X file. So we clone the controller/animation sets after the file has been loaded and allocate an array for callback keys/data.

The problem is how to release this memory when we are done with it. As the data stored here can really be whatever we like, it becomes harder to clean up (as it's heap allocated).

The interesting solution that was shown to me was to use COM. Well, not exactly full COM, but the IUnknown interface. Basically we just ensure that our context data object derives from IUnknown (and of course implements the required methods). One of the required methods is Release(). As with all COM objects, memory cleanup revolves around a reference count, and when all references are gone the object removes "itself" from memory. Also, note we will be required to implement AddRef and QueryInterface as part of the derived interface.

Note we are not registering a full COM object; we are simply using the interface provided by COM as a reference counting mechanism, so our objects can clean themselves up when required.
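The reference counting itself is tiny. The sketch below shows the mechanism in Python purely for illustration: the real thing is a C++ class deriving from IUnknown, and the class names, the snake_case method names, and the on_destroy hook here are all my own stand-ins.

```python
from typing import Callable, List


class RefCounted:
    """IUnknown-style lifetime: the object cleans itself up at count zero."""

    def __init__(self, on_destroy: Callable[[], None]):
        self._ref_count = 1            # the creator holds the first reference
        self._on_destroy = on_destroy  # stand-in for `delete this` in C++

    def add_ref(self) -> int:
        self._ref_count += 1
        return self._ref_count

    def release(self) -> int:
        self._ref_count -= 1
        if self._ref_count == 0:
            self._on_destroy()         # last reference gone: free the data
        return self._ref_count


class CallbackData(RefCounted):
    """Hypothetical context object attached to an animation callback key."""

    def __init__(self, payload: dict, on_destroy: Callable[[], None]):
        super().__init__(on_destroy)
        self.payload = payload


destroyed: List[str] = []
data = CallbackData({"event": "footstep"}, lambda: destroyed.append("freed"))
data.add_ref()     # the animation set keeps a reference -> count is 2
data.release()     # the creator is done -> count is 1, still alive
still_alive = (destroyed == [])
data.release()     # the last owner lets go -> count is 0, cleanup runs
print(still_alive, destroyed)  # True ['freed']
```

Whoever tears down the animation set only has to call Release() on each callback entry; it never needs to know what type of data was stored there.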

I thought this was a cool way to use some tried and true COM libraries to solve a very real problem. :)

Thursday, February 28, 2008

Building a Better Battle : Halo 3 AI

This presentation was given by Damian Isla from Bungie Studios. It presents the basic architecture and tools used to build the AI system in Halo 3. This lecture specifically detailed the encounter logic.

Encounters are the "dance": basically, how the system reacts and collapses in interesting ways. The "dance" is the illusion of strategic intelligence. Designers choreograph the "dance" to be interesting and to drive the pacing of the story, kinda like a football coach directs his players.

Halo 3 uses a 2 stage fallback. Enemies start off occupying a territory. The aggressor (player) then pushes them back to a fallback point. After this they are pushed to the last stand location, after which the player will "break" them and finish the battle. "Spice" is added on top of this by designers to make the encounter play out in a more realistic fashion.

Mission Designers handle the encounter tasks with the AI Engineers handling the squad (how the AI behaves autonomously).

Halo 2 used the Imperative Method to control AI (a finite state machine). The designers were given access to dictate what happens as various events are triggered (e.g. the enemy starts losing the battle). The primary problem with this model was the need for explicit transitions between states (n^2 complexity).

Halo 3 took a different approach, using the Declarative Method. This basically works by defining the end result you are looking for (with reference to AI). You enumerate the "tasks" that are available and let the system make the decision on how to perform these tasks.

One of the cool things about using the declarative method is the ability to set relative priorities. An example would be: guard the door, but if you can't do that, then guard the hallway. It also brings in the notion of hierarchical tasks (sub-tasks). An example would be that guarding the hallway means guarding both ends and the middle.

Funny comment that Halo 3 AI works like a Plinko machine. This means pour tasks into the system, prioritize them, and then pour enemies in and let the system place them and control their behavior. This also means it's an upside down Plinko machine ;) This is because tasks can be activated/deactivated at will and cause the enemies performing them to re-evaluate the situation and "do something else".
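Here is a rough sketch of what "pour tasks in, then pour enemies in" could look like. It is my own toy model, not Bungie's system: the Task fields, the capacity numbers, and the fill-in-priority-order rule are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Task:
    name: str
    priority: int                 # lower number = more important
    capacity: int = 0             # how many enemies a leaf task can hold
    active: bool = True
    children: List["Task"] = field(default_factory=list)


def leaf_tasks(task: Task) -> List[Task]:
    """Expand a hierarchical task into the active leaves that accept enemies."""
    if not task.active:
        return []
    if not task.children:
        return [task]
    leaves: List[Task] = []
    for child in task.children:
        leaves.extend(leaf_tasks(child))
    return leaves


def assign(enemies: List[str], tasks: List[Task]) -> Dict[str, str]:
    """Fill the highest-priority active tasks first; the rest spill downwards."""
    leaves = sorted((l for t in tasks for l in leaf_tasks(t)), key=lambda t: t.priority)
    remaining = list(enemies)
    assignment: Dict[str, str] = {}
    for leaf in leaves:
        for _ in range(leaf.capacity):
            if not remaining:
                break
            assignment[remaining.pop(0)] = leaf.name
    return assignment


door = Task("guard door", priority=1, capacity=2)
hallway = Task("guard hallway", priority=2, children=[
    Task("hallway near end", priority=2, capacity=1),
    Task("hallway middle", priority=2, capacity=1),
    Task("hallway far end", priority=2, capacity=1),
])
squad = ["grunt_a", "grunt_b", "grunt_c"]

before = assign(squad, [door, hallway])
door.active = False               # the player takes the door: task deactivated
after = assign(squad, [door, hallway])
print(before)
print(after)
```

Note that nobody wrote a "door lost -> go to hallway" transition. Deactivating one task and re-running the assignment is what makes the enemies "do something else", which is where the n^2 explicit transitions of the state machine approach go away.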

The system uses a proprietary scripting language named HaloScript. This allows designers, who are not programmers, to design and use the system.

Thursday, February 21, 2008

Life on the Bungie Farm: Fun Things to Do with 180 servers and 350 processors

Advantages:

- Faster iterations -> more polished games

- Keeps complexity under control

Binary Builds (game and tools)

- Automated tests are run on tool builds only

Lightmap Rendering

- Pre-compute lighting in scenes (photon mapping and custom algorithms from Hao and crew)

- Bakes the level files (output)

Content Builds

- Compiles assets into monolithic files

Website (bungie.net) Builds

Patches (maintenance items for servers)

Halo 1 -> All assets processed by hand, very few automated tasks

Halo 2 -> More automation (3 servers in farm -> one for each function)

Halo 3 -> Unified systems into single extensible system

The latest iteration, created with Halo 3, did a few new things (rewrite).

- Unified codebases, implemented single cluster.

- One farm

- Updated code to .net (C#), easier to develop/maintain

Stats

- Over 11,000 builds (exe/dll)

- Over 9,000 lightmap builds

- Over 28,000 other types of builds

- Halo 3 would not have shipped in current form without the farm.

Interface for users (developers)

- Had to be easy, simple with "one-button" submit operation

- Even if users are developers they still don't want to know what is going on behind the scenes

Architecture

- Single system/multiple workflows

- Plug in based

- Workflows divided into client / server plugins (isolation from each other)

- Server schedules jobs (messages clients)

- Clients start jobs and send status and results back to the server

- Server manages state of jobs

- All communications via SQL Server

- Incremental builds by default

- Somewhere between continuous integration and scheduled builds (devs run builds ad-hoc and there is a scheduled nightly build)
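The "server schedules, clients work, everything talks through the database" shape can be sketched as a single jobs table. This is only my guess at the general pattern: the farm used SQL Server and C#, while this sketch uses an in-memory SQLite database as a stand-in, and the table layout and function names are invented.

```python
import sqlite3

# In-memory SQLite stands in for the shared SQL Server instance.
db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE jobs ("
    " id INTEGER PRIMARY KEY, workflow TEXT, state TEXT, client TEXT, result TEXT)"
)


def schedule(workflow: str) -> int:
    """Server side: queue a job for some workflow plugin (e.g. 'lightmap')."""
    cur = db.execute("INSERT INTO jobs (workflow, state) VALUES (?, 'queued')", (workflow,))
    db.commit()
    return cur.lastrowid


def claim(client: str):
    """Client side: take the oldest queued job, or None if there is no work."""
    row = db.execute(
        "SELECT id, workflow FROM jobs WHERE state = 'queued' ORDER BY id LIMIT 1"
    ).fetchone()
    if row is None:
        return None
    db.execute("UPDATE jobs SET state = 'running', client = ? WHERE id = ?", (client, row[0]))
    db.commit()
    return row


def report(job_id: int, result: str) -> None:
    """Client side: send status and results back through the database."""
    db.execute("UPDATE jobs SET state = 'done', result = ? WHERE id = ?", (result, job_id))
    db.commit()


def state_of(job_id: int) -> str:
    """Server side: the database row is the single source of truth for job state."""
    return db.execute("SELECT state FROM jobs WHERE id = ?", (job_id,)).fetchone()[0]


job_id = schedule("lightmap")
claimed = claim("client-01")
report(claimed[0], "ok")
print(claimed, state_of(job_id))
```

A real farm would need the claim step to be atomic across many clients; this single-process sketch skips that.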

Symbol Server used (Debugging Tools For Windows)

- Symbols registered on server

Source Stamping

- Linker setting for source location

- Set at compile time

- Engineers can attach to any client from any client as long as they have Visual Studio installed.

Lightmapper was written specifically for the farm

- Chunks job parts to clients

- Merges results

Simple SLB

- Min / Max configurable

- More clients used to support workload if clients are mostly idle

Cubemap farms

- Used XBoxes and PCs for rendering and assembly.

- Pools of Xbox Dev Kits

- No client code on Xbox

- Few changes for Xbox Support

Implementation Details

- All C# (.Net)

- Object serialized to XML to start but switch to binary serialization later (speed and mem benefits)

- Downsides (memory bottlenecks, forced GCs, should have been more careful with memory)

Beyond Printf: Debugging Graphics Through Tools

Main toolsets

- Windows - PIX

- Nvidia - FXTools

Use GPU & driver counters for basic performance related issues.

Shader Perf 2.0

- Test opt opportunity

- Integrated to FX Composer

- Regression analysis

- It's in Beta 2.0!

- SDK Available

FX Composer for authoring and debugging shaders.

Use PIX for:

- Game Assets (textures, shaders, vertex buffers, index buffers, etc)

- API (DirectX)

Use Nvidia tools for:

- Driver related items

- Hardware specific items

Link dump:

http://developer.nvidia.com/PerfKit

http://developer.nvidia.com/PerfHud

GPU Optimization with the Latest NVIDIA Performance Tools

Optimization Techniques

- System -> CPU to GPU and multithreading

- Application -> game code

- Microcode -> lowest level (tied very closely to hardware)

Optimizations should start at the highest level and work their way down. Microcode optimizations can be beneficial but will only work with certain configurations (good for consoles, not for PCs).

If the GPU is at > 90% utilization, look for a GPU bottleneck first; if it is lower, the CPU side (app code, driver, etc.) should be looked at.

PerfHud (tool from Nvidia).

- In version 6 of this tool, no special driver is needed (retail drivers have instrumentation hooks already in place).

- SLI Optimizations can be discovered with this tool

- API Call Data Mining (both in tool and export to own data analysis offline)

- Shader Visualization / Texture Visualization

- Hot key mapping to trigger user defined options (for debugging).

The demo shown was Marble Blast Ultra (pc version). There were optimizations shown.

Next demo was with Crysis.

- Programmers should start putting PerfMarkers in their code now. These will help later.

- API Time Graph is a new feature in beta

- Perf hints (single and SLI) are given

- Subtotals in Frame Profiler

- Break (_int3) on draw calls (new feature)

- Support for 32bit apps on 64bit OS (was not previously supported natively by driver)

There were some OpenGL tools discussed.

Environment Design in HALO 3

Typically there are artists, who manage geometry, and designers, who manage gameplay. Bungie has titled an architect as the "glue" between these two. This is the role Mike fills at Bungie (as well as a finishing artist).

Pre-production model

- Broad Timeline of level and game

- Napkin sketch

- Concept Art

- Whiteboard

Aspect 1 : The Hook

- Prominent features in the rooms

- Don't create mazes for player to get lost in

- Force orientation without using force :)

- Should obviously be easy to grasp and navigate

- Suggest a tactic by geometry, lighting, etc (no force)

Aspect 2 : Scale

- How large should the level "feel"

- Engagement ideas/planning

Aspect 3 : Combat Elements

- Fronts

- Layers

- AI Blinds

Aspect 4 : Movement Elements

- Player Shortcuts (make the player feel like he discovers ;))

- One-way paths

- Ninja paths

- Vehicle flows

Mike then shared some concept art, geometry and finishing art with us. Link to presentation will be posted next week.

Audio Post-Mortem: HALO 3

First off, let me just say that in my opinion Marty O'Donnell is one of the best composers/audio directors I have ever seen. He and his team are very passionate about audio. It really inspires others to push as hard as they do for audio perfection.

Jay Weinland started the lecture by talking about how things changed from H2 to H3. The fundamental difference between the original Xbox (Gen1) and the Xbox 360 was that the DSP audio chip was removed from the 360. Also, with Gen1 the HDD was guaranteed to be there, but with the Xbox 360 the HDD is optional, so the system has to work with both configurations. With an HDD it's easier: stream everything from the HDD and use a small amount of system memory (with Gen1 the audio chip was used, so literally a few MB of system memory was needed). This meant that more programming was required to handle the audio on the 360. Matt Noguchi and help from Microsoft were employed with great success.

C Paul Johnson then spoke about LOD and how audio levels were set based on distance, which was new with the 360. The algorithm developed also took into account whether an HDD was attached, and audio is culled if it's missing, giving a "degraded" but functional system. He also demonstrated how the looping works (to save space, but randomized so it does not get boring for the user). This part was primarily about ambient sound.

Marty then spoke about how he composes and tools used to do his magic. He demonstrated how he puts various clips together with in house created tools (Guerilla) and other tools.

E Pluribus Unum: Matchmaking in HALO 3

This was a pretty technical talk about the internals of the matchmaking process. Chris started by talking about why this is needed. The idea of user being matched in games that will be fun (not too easy or hard) with some way to hold the user and keep them playing.

The problem with H2 matchmaking was that it was based heavily on skill. The cheaters who had figured out ways to break the system created bad experiences for typical users.

With H3, the team worked with Microsoft (Research) to integrate TrueSkill into their matchmaking and make the process seamless and transparent to users. This is a pretty complex system based on a Bayesian algorithm that computes the player's real skill. This, combined with some fairly complex networking code, pulls off the matchmaking we are now using with H3. Chris presented results showing > 80% of all Halo users playing over 100 multiplayer games. This is a pretty substantial improvement over H2.

The problem with the new system is that its complexity can confuse users (they don't get to see what is going on under the covers, nor would most understand it).
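To give a feel for the Bayesian part, here is a heavily simplified sketch of a TrueSkill-style update for one head-to-head game with no draws. It follows the published two-player update equations with the default parameters from Microsoft Research's description (mu = 25, sigma = 25/3, beta = sigma/2); what Halo 3 actually runs (teams, draws, its own tuning) was not covered in the talk, so treat everything here as an assumption.

```python
import math
from typing import Tuple

Rating = Tuple[float, float]        # (mu, sigma): believed skill and uncertainty

MU0 = 25.0
SIGMA0 = 25.0 / 3.0
BETA = SIGMA0 / 2.0                 # per-game performance noise


def pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def cdf(x: float) -> float:
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def update(winner: Rating, loser: Rating) -> Tuple[Rating, Rating]:
    """Two-player, no-draw update: shift the means apart, shrink both sigmas."""
    (mu_w, s_w), (mu_l, s_l) = winner, loser
    c = math.sqrt(2.0 * BETA ** 2 + s_w ** 2 + s_l ** 2)
    t = (mu_w - mu_l) / c
    v = pdf(t) / cdf(t)             # large when the result was a surprise
    w = v * (v + t)
    new_winner = (mu_w + s_w ** 2 / c * v, s_w * math.sqrt(1.0 - s_w ** 2 / c ** 2 * w))
    new_loser = (mu_l - s_l ** 2 / c * v, s_l * math.sqrt(1.0 - s_l ** 2 / c ** 2 * w))
    return new_winner, new_loser


def conservative_skill(r: Rating) -> float:
    """A cautious estimate of skill: three sigmas below the mean."""
    return r[0] - 3.0 * r[1]


new_player = (MU0, SIGMA0)
won, lost = update(new_player, new_player)
print(won, lost, conservative_skill(won))
```

Because every player carries an uncertainty as well as a skill estimate, a new account converges within a few games instead of grinding up a ladder.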

I will post the slides when available (Feb 25th).

The profile of a Great Software Tester

Anibal has a very good type of analysis used to determine if a person fits as a good software tester. The attachment I will post will show this better. I will post an update when I get this.

Technical Issues in Tools Development (Roundtable Discussion)

Topics:

- Using reflection to help build GUI for tools

- 3rd Party tools / components used to build tools

- C# and managed code for tool building

Reflection with C++ and C is a bit harder to pull off (use header files) than something like C# or Java. This limits this approach for dynamic generation of gui based tools and plugins for IDEs.
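As an illustration of why reflection makes this easy in a managed or dynamic language, the sketch below builds a property grid description straight from a data class. It is in Python rather than C# only to keep it short; the LightSettings type and the widget names are made up.

```python
from dataclasses import dataclass, field, fields
from typing import List, Tuple


@dataclass
class Color:
    r: int = 255
    g: int = 255
    b: int = 255


@dataclass
class LightSettings:
    """Hypothetical asset type that a tool needs to expose for editing."""
    name: str = "key light"
    intensity: float = 1.0
    casts_shadows: bool = True
    color: Color = field(default_factory=Color)


# Which editor widget to show for each field type (names are illustrative).
WIDGET_FOR_TYPE = {
    str: "text box",
    float: "slider",
    int: "spinner",
    bool: "check box",
    Color: "colour picker",
}


def build_property_grid(obj) -> List[Tuple[str, str]]:
    """Walk the object's fields via reflection and pick a widget for each one."""
    grid = []
    for f in fields(obj):
        value_type = type(getattr(obj, f.name))
        grid.append((f.name, WIDGET_FOR_TYPE.get(value_type, "text box")))
    return grid


grid = build_property_grid(LightSettings())
for row in grid:
    print(row)
```

Adding a field to LightSettings makes it show up in the tool with no GUI code changes, which is the part that takes header parsing or hand-written tables in C or C++.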

Some of the studios still using C++ or C for tools development were commenting that technical artists hate (yes I said hate) most of the grids and property setters exposed by tools. Most of the time this is because artists (creative type) are looking for color pickers and such (nice gui's) and developers are more analytical (just numeric values will do). The tools typically created by tools developers fit better with developers.

Possible ways to fix this were discussed. One would be to adopt something like the MVC pattern and expose different views of the same data depending on the user.

With regards to 3rd party tools, artists again are looking for more WYSIWYG interfaces, instead of the lower level interfaces they are given. The idea of creating debug versions of data structures as well as executables came up. The problem here is when problems only show in the release build (data structures) and are ok in debug build.

Bug databases are crucial, and automation to fill them without intervention (or with only light intervention) from artists is an absolute requirement. Artists will typically just quit using broken tools or find workarounds.

On the managed code side, Sony Online mentioned they were using CruiseControl.NET but did not have it fully working yet (automated builds were done, but not functional tests).

Many studios are adopting Agile software development tactics (like daily calls about projects / issues).

Automated logging came up, with log4net pushed as a very good tool.

Maybe 60% of the studios in attendance (including Sony Online, Bioware, Pandemic, Microsoft Games Studios, 2K, EA) are currently using C# and managed code to build tool sets (at some level).

The ones still using C or C++ were doing so for richer data when crashes happened. Also, these studios were happy with what C# could do, but typically they have to use what is in place, with not a lot of time to rewrite everything in a new language.

The idea of standardizing on languages (scripting, tools, engine) was discussed. John pointed out that it's almost absurd to try this. Developers use what they are familiar with to get the job done, whether it's Ruby, Java, .Net, C, C++, Lua, Perl or whatever else. The one key point was not to write your own scripting engine, which is where some studios went. This becomes more of a computer science project than a functional piece of software. Standardizing on a variety of languages (commonly used, non-proprietary) is required. Key point: make all tools dump data in a common format (XML)! Embrace the chaos, don't try to eliminate it!

Technical Consulting Engineer, Intel C++ Compilers (Sponsored by Intel)

Wednesday, February 20, 2008

Running Halo 3 without a Hard Drive: Presentation by Matt Noguchi

- Current Next Gen Games are IO bound.

- DVD drive supports about 12MB/s transfer rate

The key point Matt stressed was to minimize seeks. With an HDD the problem is much less pronounced, but since some systems will not have one, the game must work with just the DVD drive.

Break the levels down to required and optional resources.

- Required resources -> blocking to load/synchronous

- Optional resources -> non-blocking to load / asynchronous

Sound assets are a huge issue (level 5 in H3 has 566MB of sound assets alone)

- Cannot stream sound from DVD (only HDD)

- Solution is to cut out AI dialogue when on DVD only (limited experience)

- With HDD, stream everything (speed much less issue)

Break up levels into zone sets, with transitional volumes created by designers. Trigger volumes pre-cache assets as they are needed (and evict ones that are no longer required). Bungie had to limit the objects used in each zone set (to keep under the memory limit of ~334.8MB).

Next problem: thread contention (game thread/render thread). The solution was to add a further layer of abstraction over resources while the streaming IO is loading/evicting them (use another cache).

The last optimization was the layout of items on disc. Global or shared items (used in most levels) should be written to the DVD at the same location to allow sequential IO (much faster). All game assets in H3 totaled 15GB, but after optimization and culling they were under 7GB.
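A toy version of the zone set idea is sketched below. The resource names and sizes are invented, the async side is not modelled (optional loads just happen inline), and only the ~334.8MB budget comes from the talk; it is meant to show the evict / required / optional ordering, not Bungie's streaming code.

```python
from typing import Dict, List, Set


class ZoneSetCache:
    """Keeps resident resources under a fixed memory budget across zone sets."""

    def __init__(self, sizes_mb: Dict[str, float], budget_mb: float):
        self.sizes_mb = sizes_mb
        self.budget_mb = budget_mb
        self.resident: Set[str] = set()

    def used_mb(self) -> float:
        return sum(self.sizes_mb[r] for r in self.resident)

    def enter_trigger_volume(self, required: Set[str], optional: Set[str]) -> List[str]:
        """Switch to the next zone set; returns the optional resources skipped."""
        # 1. Evict everything the next zone set does not reference.
        self.resident &= (required | optional)
        # 2. Required resources are blocking: they must all fit.
        for res in sorted(required - self.resident):
            self.resident.add(res)
        if self.used_mb() > self.budget_mb:
            raise MemoryError("zone set exceeds the memory budget")
        # 3. Optional resources are best effort: load them only while room remains.
        skipped: List[str] = []
        for res in sorted(optional - self.resident):
            if self.used_mb() + self.sizes_mb[res] <= self.budget_mb:
                self.resident.add(res)
            else:
                skipped.append(res)
        return skipped


sizes = {
    "geometry_a": 100.0, "textures_a": 120.0, "ambience_a": 60.0, "dialogue_a": 80.0,
    "geometry_b": 110.0, "ambience_b": 50.0,
}
cache = ZoneSetCache(sizes, budget_mb=334.8)

skipped_a = cache.enter_trigger_volume({"geometry_a", "textures_a"}, {"ambience_a", "dialogue_a"})
resident_a = set(cache.resident)

# Zone set B shares textures_a, so that resource stays resident and is not reloaded.
skipped_b = cache.enter_trigger_volume({"geometry_b", "textures_a"}, {"ambience_b"})
print(skipped_a, sorted(resident_a))
print(skipped_b, sorted(cache.resident))
```

Dropping dialogue_a when it does not fit is the same kind of degradation as cutting AI dialogue on DVD-only systems: the level still plays, just with less.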

Monday, February 18, 2008

Tuesday, February 12, 2008

GDC 2008 Agenda

Thursday, January 3, 2008

New GC Feature in Framework 2.0 SP1

There is a bevy of new functionality added with SP1 for .NET Framework 2.0, but one item of particular interest to me was the change to the garbage collector. There are 2 major changes that I will discuss.

GC Collection Modes

There is now a new overload of the GC.Collect method. The new method signature is void System.GC.Collect(int generation, System.GCCollectionMode mode). The generation parameter can be 0 to System.GC.MaxGeneration (2 is usually the upper limit on server GC). The mode parameter can be Default, Forced or Optimized.

- Default - same as if you call System.GC.Collect with no mode specified

- Forced - forces the collection to happen immediately (currently this is the same as Default)

- Optimized - tells GC to only collect if it is determined to be "productive"

GC Latency Modes

A new property has been added to the runtime to help control the GC. The new property signature is System.Runtime.GCLatencyMode System.Runtime.GCSettings.LatencyMode { get; set; }. The following explains the differences between the modes.

- Batch - allow GC to work with maximum throughput at the cost of responsiveness

- Interactive - allows the GC to be balanced between max throughput and max responsiveness

- LowLatency - allows the GC to be "stalled", useful when responsiveness or CPU load is needed by the application and GC should not interrupt.